How Artemis Can Fix the Gaps in Sensory Rehabilitation: A Practical and Scalable Workflow

Mar 12, 2026

This article explains a practical, clinic-friendly workflow for using Artemis (our tactile assessment and retraining platform) to address the three most common bottlenecks in sensory rehabilitation, the retraining of sensation after injury: inconsistent testing (hard to compare visits), manual documentation (hard to track), and unstructured home dosing (hard to get enough reps).

We previously wrote an article on the standard of care for sensory assessment and rehab.

Let’s revisit the challenges:

The problem

Sensory rehab often breaks down because the loop is incomplete:

Testing varies session-to-session (hard to compare results)

Data capture is manual (hard to track)

Training is hard to prescribe and scale (hard to get enough reps)

Artemis closes the loop by:

Producing a diagnostic report (PDF summary) after each test (baseline + follow-ups)

Using that report as the anchor for comparison (track change) and as the input to rehab (report → protocol)

The diagrams below show Artemis as three connected workflows that implement this: two ways to run diagnostic testing, plus an automated rehab protocol driven by the diagnostic report.

From a clinician standpoint, each workflow is designed to solve a day-to-day bottleneck:

Reduce variability (get comparable results across visits/therapists)

Reduce documentation burden (capture once, reuse everywhere)

Increase practical dose (and make it realistic to administer without a ton of manual effort)

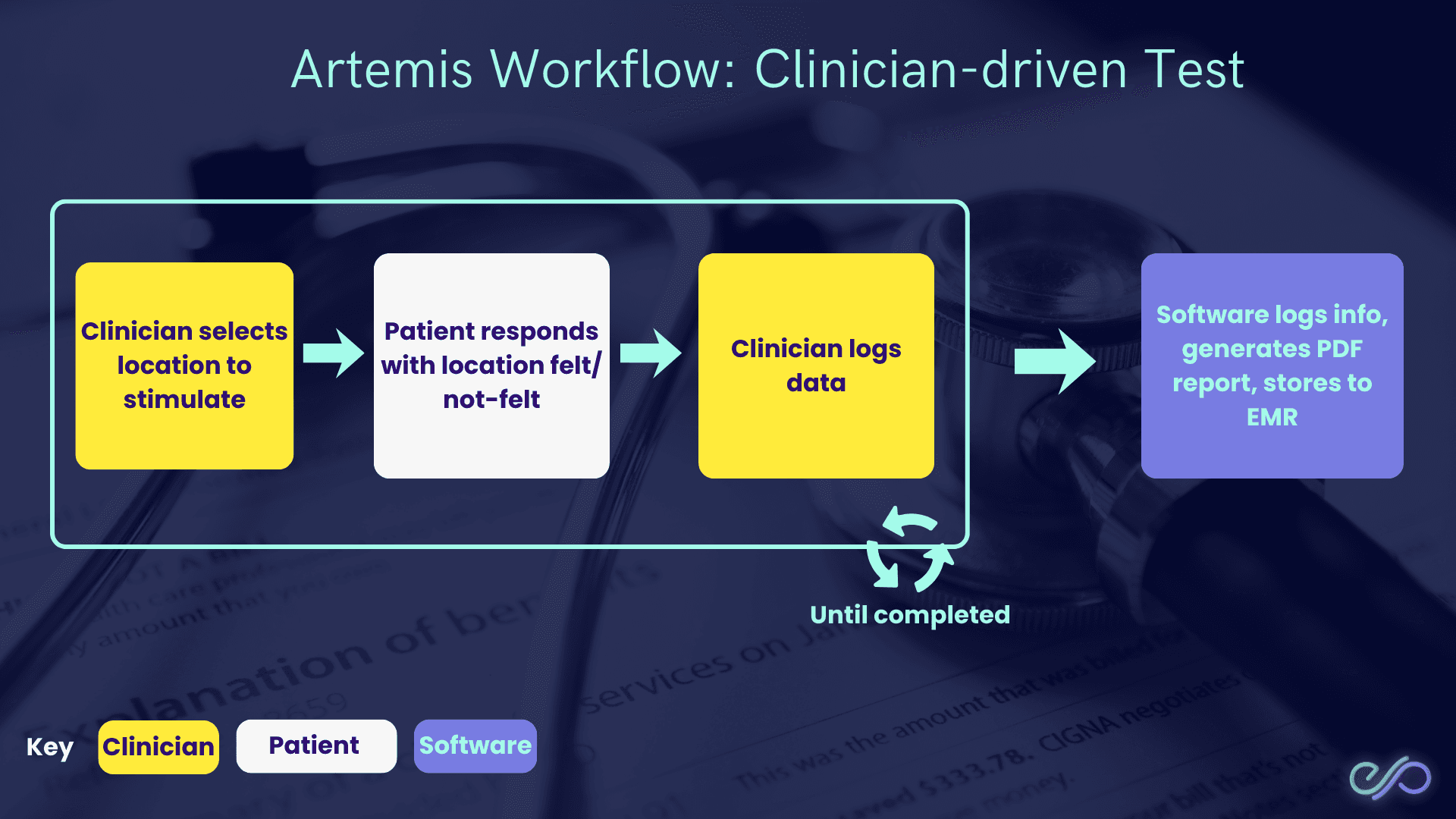

Workflow 1: Clinician-driven test

In a clinician-driven test, the clinician chooses where to stimulate (what to test), while the software handles logging and reporting.

Why this matters:

Matches real clinical reasoning (you can probe specific areas you suspect are impaired).

Keeps the workflow fast in-session (no custom spreadsheets or ad-hoc notes).

Produces consistent documentation (a repeatable PDF report) for baseline and follow-ups.

How it works:

Clinician selects location to stimulate (chooses the test site).

Patient responds with location felt/not-felt (reports perception).

Clinician logs data (records the response).

Software logs the data, generates a PDF report, and stores it in the EMR (electronic medical record).

This loop repeats until the test is completed.
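The stimulate → respond → log cycle above can be sketched in a few lines of Python. This is only an illustration of the data that each cycle might capture, not Artemis's actual data model: the `Trial` and `ClinicianSession` names, the site labels, and the per-site detection-rate summary are all assumptions made for the example, and PDF generation and EMR storage are left out.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Trial:
    site: str        # test site chosen by the clinician (hypothetical label)
    felt: bool       # patient's felt / not-felt response
    timestamp: str   # when the response was logged (UTC, ISO 8601)


@dataclass
class ClinicianSession:
    patient_id: str
    trials: list = field(default_factory=list)

    def log(self, site: str, felt: bool) -> None:
        """Record one stimulate -> respond -> log cycle."""
        now = datetime.now(timezone.utc).isoformat()
        self.trials.append(Trial(site, felt, now))

    def summary(self) -> dict:
        """Per-site detection rate, the kind of table a report could contain."""
        counts = {}
        for t in self.trials:
            felt, total = counts.get(t.site, (0, 0))
            counts[t.site] = (felt + int(t.felt), total + 1)
        return {site: felt / total for site, (felt, total) in counts.items()}


# The clinician decides which site to probe on each pass through the loop.
session = ClinicianSession(patient_id="demo-001")
session.log("index_tip", True)
session.log("index_tip", False)
session.log("thumb_tip", True)
print(session.summary())  # {'index_tip': 0.5, 'thumb_tip': 1.0}
```

Because every trial is stored in the same structure, the same summary can be produced at baseline and at each follow-up, which is what makes the reports comparable.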

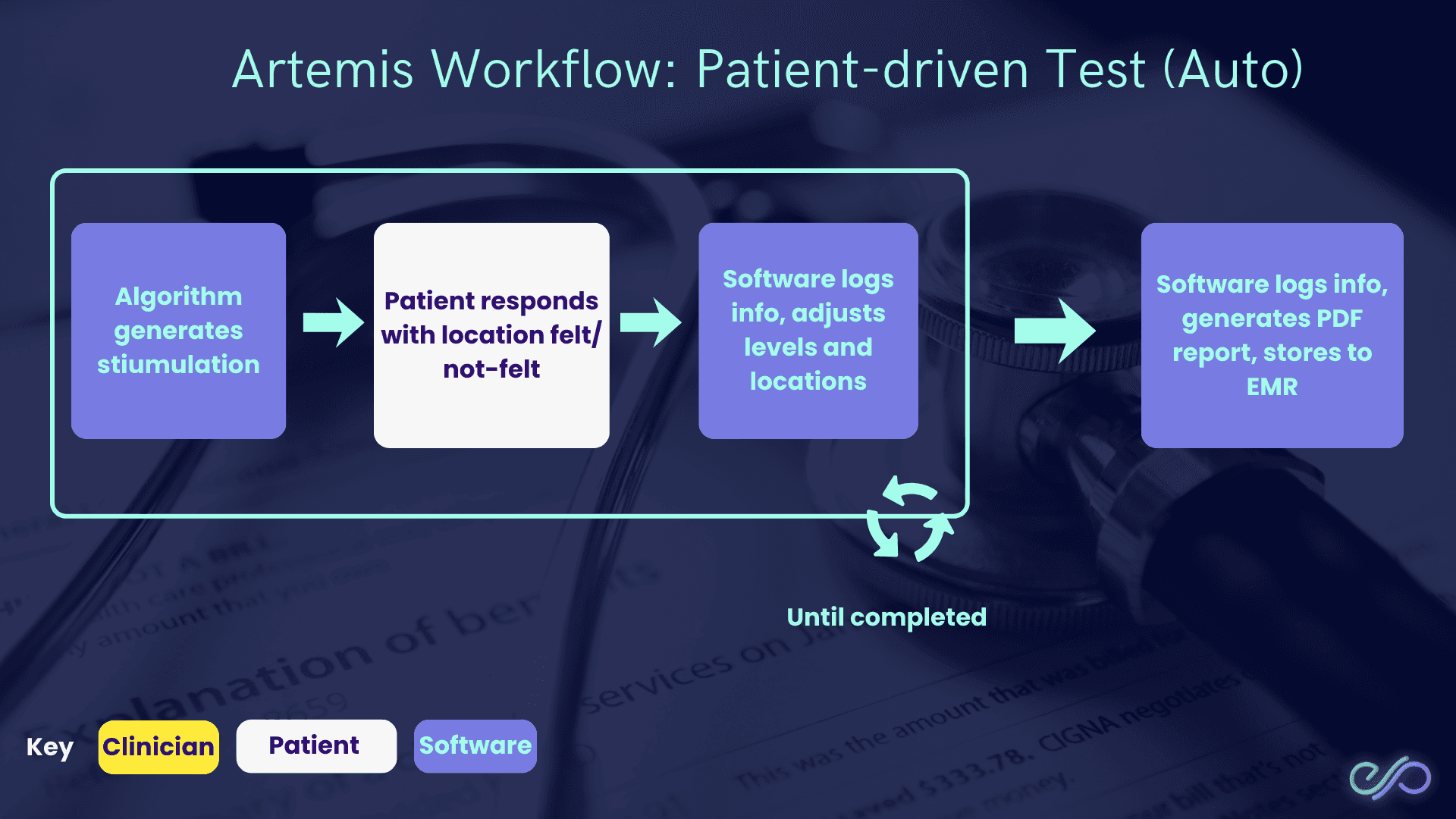

Workflow 2: Patient-driven test (Auto)

In a patient-driven test, the software runs the session end-to-end, with the patient providing responses.

Why this matters:

Reduces clinician time per session (the patient can complete structured trials with minimal supervision).

Improves standardization (the same logic runs each time, reducing protocol drift).

Makes repeat testing feasible (easier to run baselines + regular re-tests).

How it works:

Algorithm generates stimulation (software chooses what to test next).

Patient responds with location felt/not-felt (where they felt it, or didn’t).

Software logs info and adjusts levels + locations (changes intensity/site based on answers).

Software generates a PDF report and stores it in the EMR (the clinic's electronic medical record system).

This loop repeats until the test is completed.
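To make "adjusts levels based on answers" concrete, here is a minimal sketch of one common adaptive method, a 1-up/1-down staircase. It is a generic psychophysics technique used here for illustration; the article does not say which algorithm Artemis runs, so the function name, the 1 to 10 level scale, the stopping rule, and the simulated patient are all assumptions. For simplicity the sketch visits sites in a fixed order rather than choosing locations adaptively.

```python
def run_staircase(respond, sites, start=5, lo=1, hi=10,
                  max_reversals=4, max_trials=30):
    """Simple 1-up/1-down staircase, run once per site.

    respond(site, level) -> bool is the patient's felt / not-felt answer.
    Returns ({site: estimated threshold or None}, full trial log).
    """
    log, thresholds = [], {}
    for site in sites:
        level, last_felt, reversals, trials = start, None, [], 0
        while len(reversals) < max_reversals and trials < max_trials:
            felt = respond(site, level)
            log.append((site, level, felt))
            trials += 1
            if last_felt is not None and felt != last_felt:
                reversals.append(level)  # the answer flipped: note the level
            last_felt = felt
            # Felt -> try a weaker stimulus; not felt -> try a stronger one.
            level = max(lo, level - 1) if felt else min(hi, level + 1)
        # Estimate the threshold as the mean level at the reversals.
        thresholds[site] = sum(reversals) / len(reversals) if reversals else None
    return thresholds, log


# Simulated patient (assumption): feels any stimulus at or above a fixed level.
true_threshold = {"index_tip": 3, "thumb_tip": 7}

def simulated_patient(site, level):
    return level >= true_threshold[site]

thresholds, log = run_staircase(simulated_patient, ["index_tip", "thumb_tip"])
print(thresholds)  # {'index_tip': 2.5, 'thumb_tip': 6.5}
```

Each estimate lands between the strongest level the patient missed and the weakest level they felt. The `max_trials` cap keeps the loop from running forever when a patient feels everything or nothing at a site.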

Key output:

Diagnostic report (a PDF summary that serves as a shareable baseline), which you can use to compare follow-ups and to drive rehab protocol generation (report → training plan).

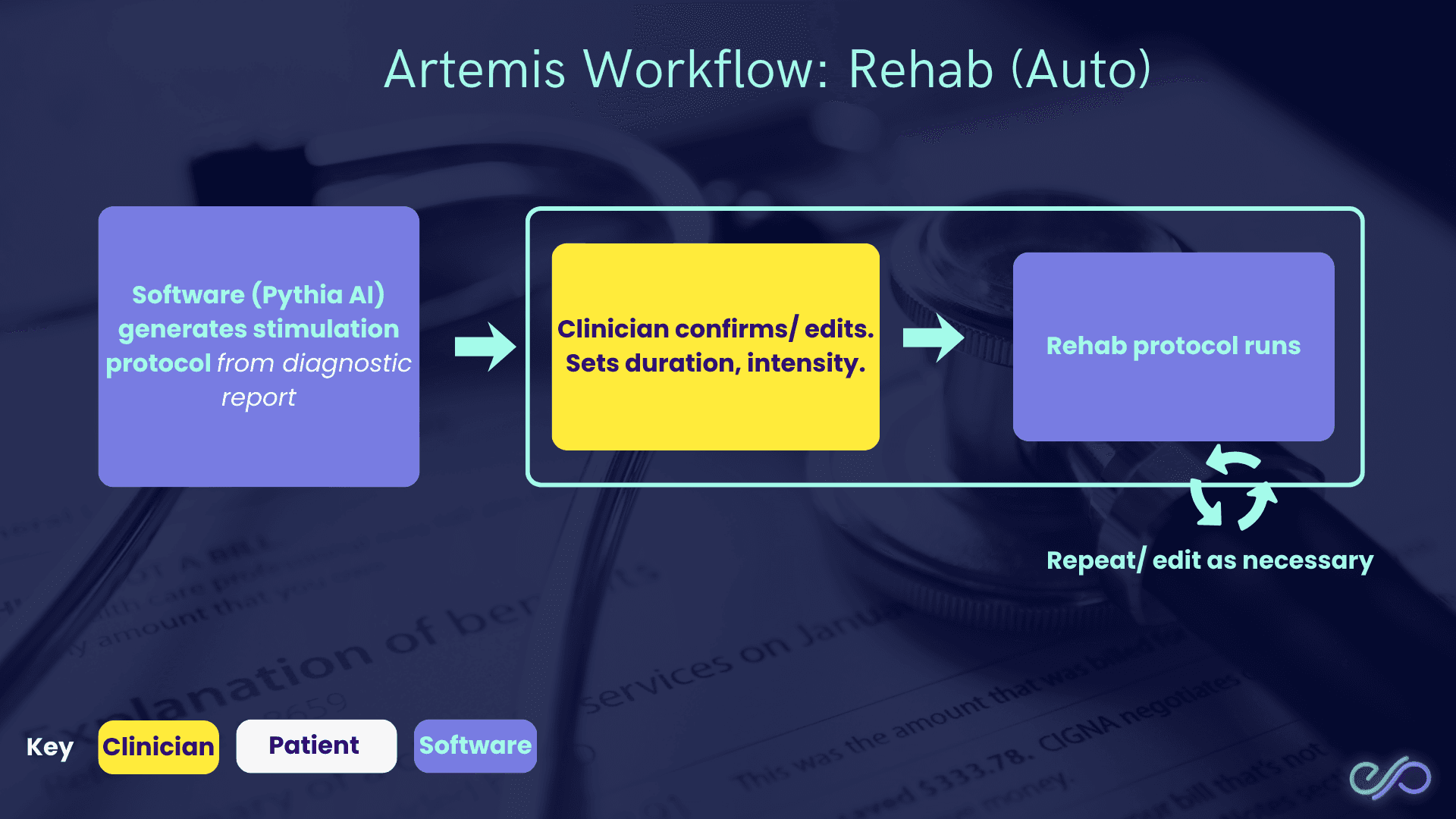

Workflow 3: Rehab (Auto)

The rehab workflow then turns the diagnostic report into a training protocol.

Why this matters:

Closes the gap between “we measured it” and “what do we do about it?” (assessment → action).

Makes dosing more consistent (duration/intensity are explicit, not ad-hoc).

Supports progression over time (you can repeat and edit based on new reports).

How it works:

Software (Pythia AI) generates a stimulation protocol from the diagnostic report (a plan based on measured deficits).

Clinician confirms/edits and sets duration + intensity (how long and how strong).

Rehab protocol runs (patient completes the session).

This loop repeats, with the clinician editing as necessary.
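The report → protocol → clinician edit sequence can be illustrated with a simple rule-based stand-in. This is not how Pythia AI generates protocols, which the article does not describe. The `ProtocolStep` type, the cutoff, the intensity margin, and the default duration below are hypothetical values chosen only to show the shape of the handoff.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ProtocolStep:
    site: str
    intensity: float   # stimulation level (arbitrary units, assumed)
    minutes: int       # training duration for this site


def draft_protocol(report, impaired_above=4.0, margin=1.0, minutes=5):
    """Rule-based stand-in: train each site whose measured threshold exceeds
    a cutoff, starting slightly above that threshold."""
    return [
        ProtocolStep(site, threshold + margin, minutes)
        for site, threshold in sorted(report.items())
        if threshold > impaired_above
    ]


# Hypothetical diagnostic report: detection threshold per site.
report = {"index_tip": 2.5, "thumb_tip": 6.5, "palm": 8.0}
draft = draft_protocol(report)

# Clinician review: accept the draft, but lengthen training for one site.
final = [replace(s, minutes=10) if s.site == "palm" else s for s in draft]

for step in final:
    print(step)
```

Keeping the draft immutable and producing an edited copy means the generated plan and the clinician's final plan can both be kept on record.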

What the three workflows enable

Standardized capture (consistent logging) → easier comparisons over time.

Faster documentation (auto PDF + EMR) → less admin burden.

Clear bridge from assessment to rehab (report → protocol) → easier to prescribe structured home/clinic sessions.
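As a small illustration of why standardized capture makes comparison easier, the sketch below diffs two reports site by site. It assumes each report can be reduced to a mapping from site to detection threshold, where a lower threshold means better sensation. That representation, the site names, and the numbers are assumptions for the example, not the actual Artemis report format.

```python
def compare_reports(baseline, follow_up):
    """Per-site change in threshold for sites present in both reports.

    Negative values mean the follow-up threshold is lower than baseline.
    """
    shared = sorted(set(baseline) & set(follow_up))
    return {site: follow_up[site] - baseline[site] for site in shared}


baseline = {"index_tip": 2.5, "thumb_tip": 6.5}
follow_up = {"index_tip": 2.5, "thumb_tip": 5.0, "palm": 8.0}

change = compare_reports(baseline, follow_up)
print(change)  # {'index_tip': 0.0, 'thumb_tip': -1.5}
```

Sites tested at only one visit (here, `palm`) are left out of the comparison rather than guessed at.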

Conclusion

The limitations in sensory assessment show up in daily practice as limited clinician time, inconsistent protocols, hard-to-interpret measurements, and difficulty delivering a sufficient dose of home practice.

Artemis addresses these constraints by closing the loop from assessment → documentation → rehab:

Time/resource constraints: patient-driven testing reduces hands-on clinician minutes; clinician-driven testing keeps targeted exams fast.

Standardization + measurement: software-defined workflows plus a consistent diagnostic report (PDF) make baseline and follow-ups comparable.

Home program delivery: rehab (Auto) turns the diagnostic report into a concrete protocol (duration + intensity) that can be repeated and adjusted.

Sensory rehabilitation still depends on clinical judgment, but Artemis makes the workflow more repeatable (consistent tests), more documentable (structured outputs), and more dose-feasible (more sessions between visits).